The McPhail AI Safety Patents Portfolio

The McPhail AI Infrastructure Patents portfolio covers methods, systems, and assessment architectures for human oversight of AI-generated work. Its premise is that as generative AI takes on a growing share of professional output, the bottleneck shifts from production to verification, and the humans positioned to catch AI-generated errors become the critical safety layer in any AI-assisted workflow. Active areas of development include code-generated psychometric assessments that measure human capacity to detect AI errors, calibration-based credentialing for human reviewers, multi-reviewer consensus and routing systems for high-stakes AI outputs, verification gates embedded within AI workflows, distractor analysis methods for instructional improvement, and structured legal document drafting systems. The portfolio's defining principle is that the value of AI infrastructure inventions lies not in replacing human judgment but in measuring it, routing it, and embedding it where it is most needed.

The accompanying word cloud reflects technical vocabulary associated with the portfolio's current invention set.

Public Disclosures

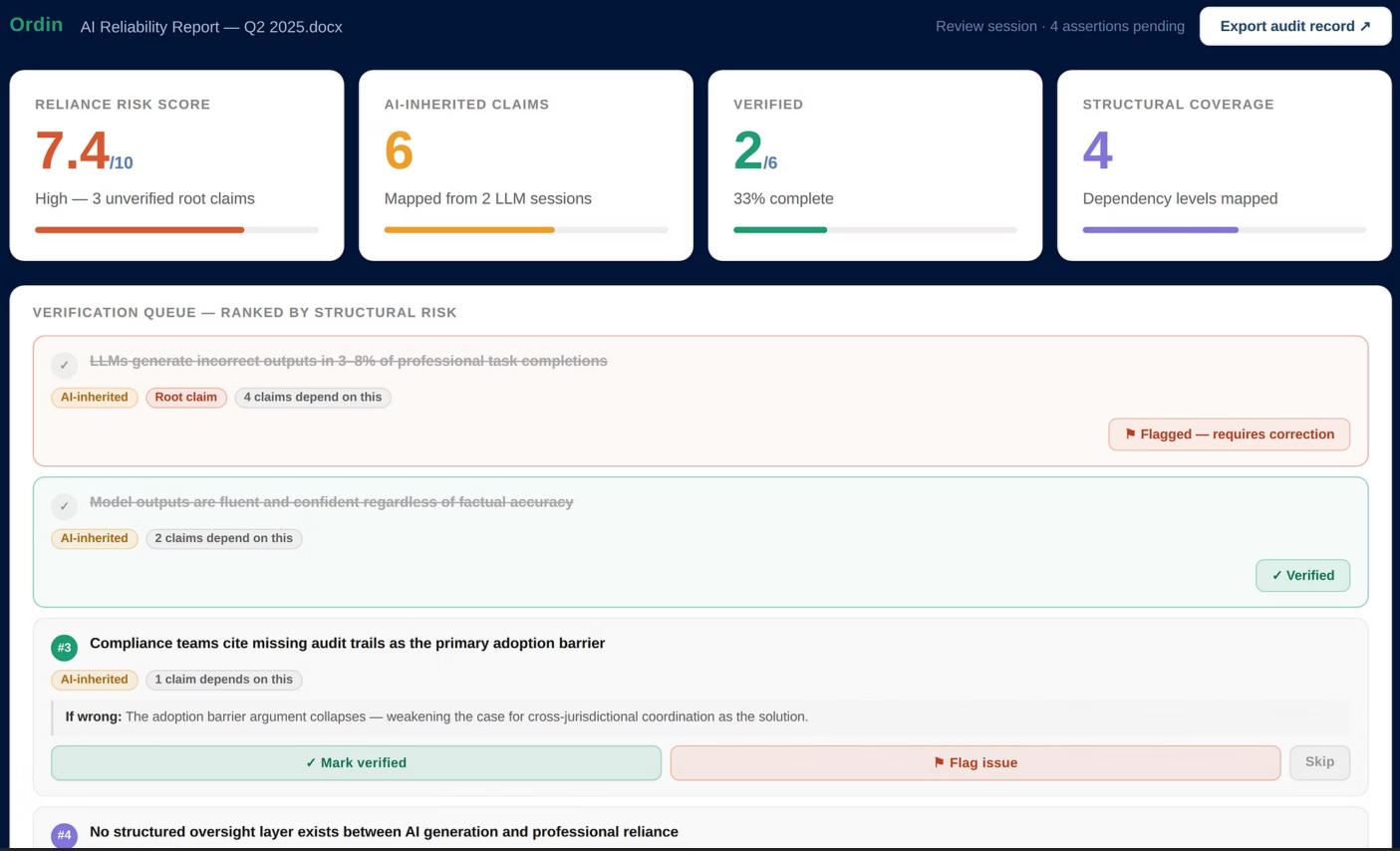

4/1/2026 Ordin: A dependency-linked claim decomposition system for AI-assisted workflow verification

Co-inventor: Aarav Pasad

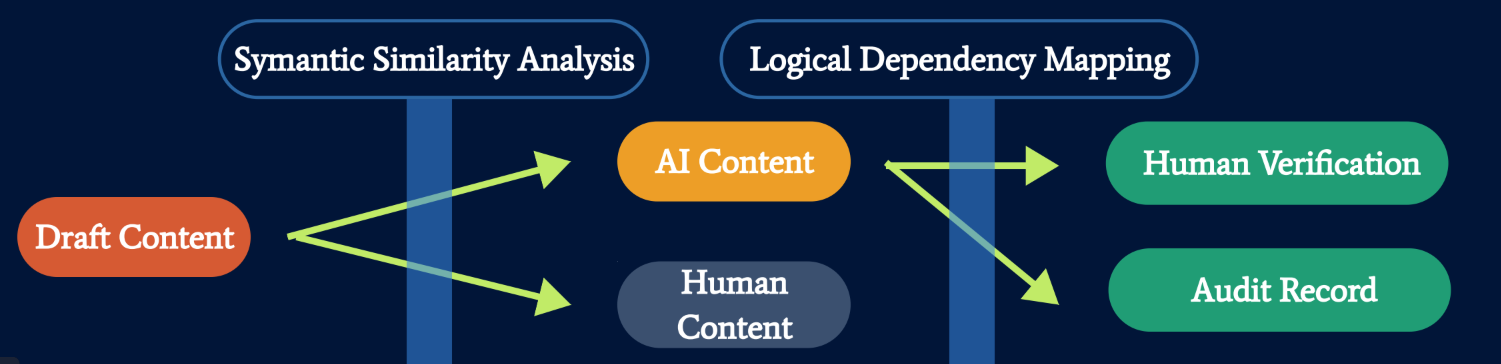

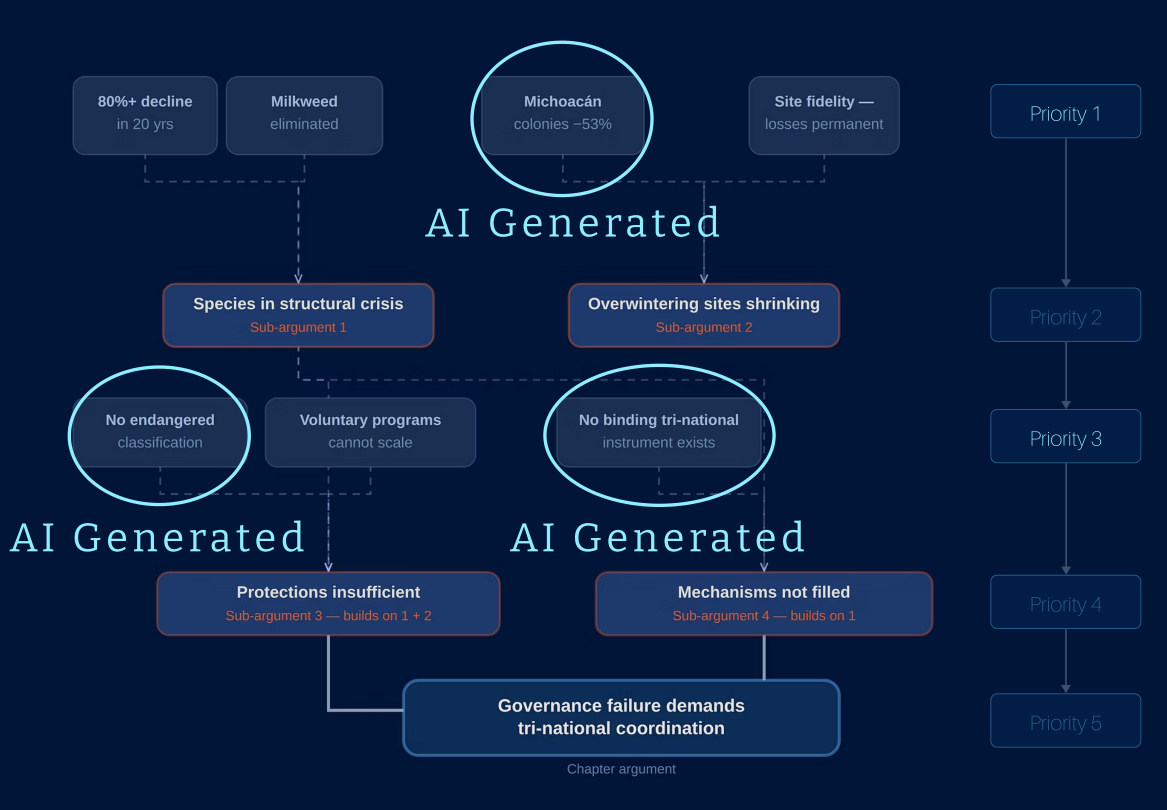

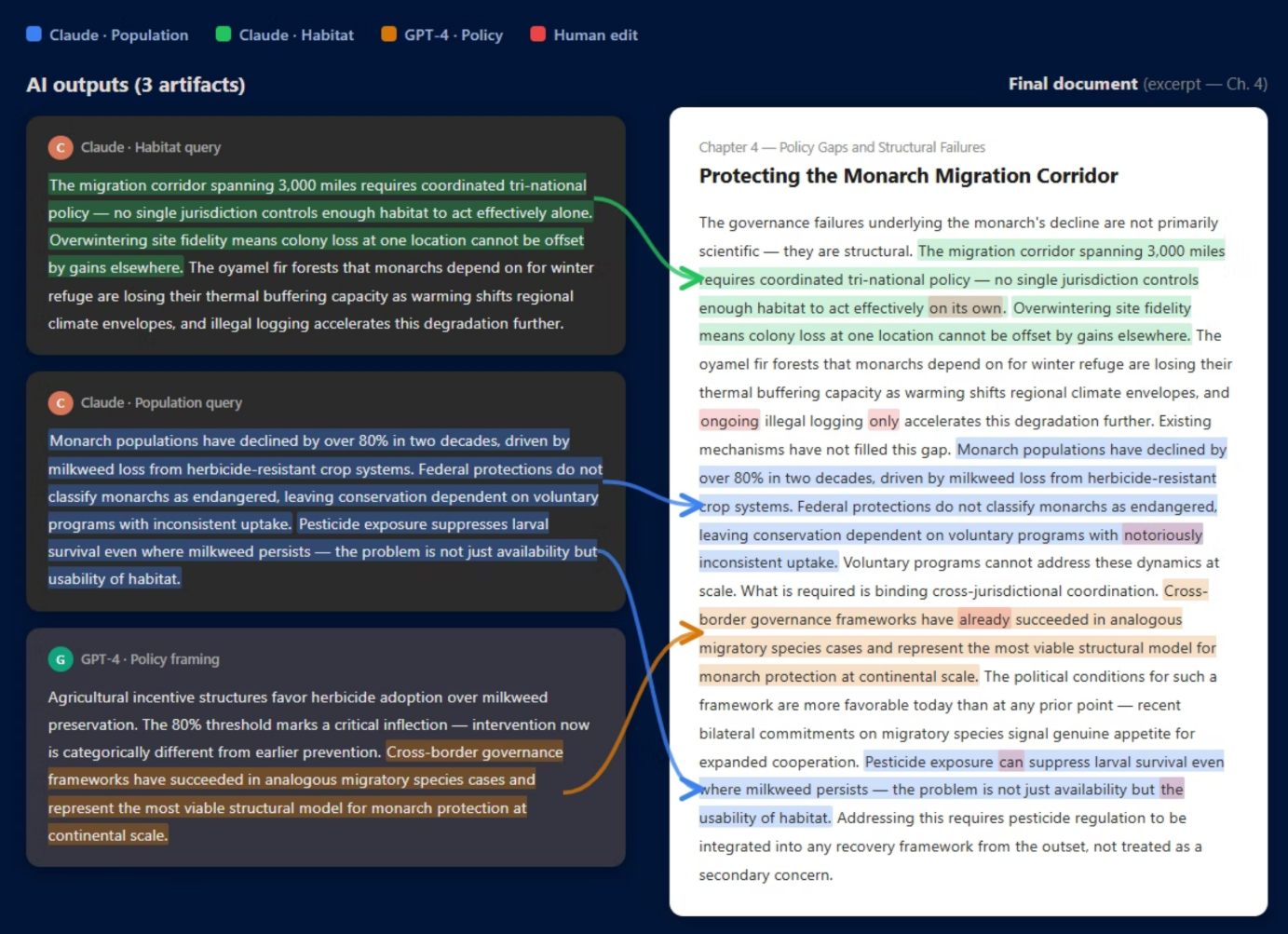

Ordin is a software-based assertion mapping and targeted verification platform for AI-assisted research work. It traces how LLM-generated content flows into final outputs and prioritizes human review by logical dependency and structural risk, addressing longstanding limitations of detection-based approaches to AI accountability.
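
As a rough illustration of what prioritizing review by logical dependency could look like, the sketch below models a document as a set of claims, each listing the claims it relies on, and ranks claims for human review. It is not the disclosed system: the function names (`downstream_counts`, `review_order`), the claim labels, and the ranking rule (LLM-generated claims first, then claims with the most downstream dependents) are all assumptions made for this example.

```python
from collections import defaultdict


def downstream_counts(depends_on):
    """Count how many claims rely on each claim, directly or transitively.

    depends_on maps a claim ID to the list of claim IDs it relies on.
    (Hypothetical data model, assumed for this sketch.)
    """
    # Invert the edges: supports[p] holds the claims that directly rely on p.
    supports = defaultdict(set)
    for claim, premises in depends_on.items():
        for premise in premises:
            supports[premise].add(claim)

    def reach(start):
        # Iterative depth-first walk over everything built on top of `start`.
        seen, stack = set(), [start]
        while stack:
            node = stack.pop()
            for child in supports.get(node, ()):
                if child not in seen:
                    seen.add(child)
                    stack.append(child)
        seen.discard(start)  # a claim does not count as its own dependent
        return seen

    claims = set(depends_on) | set(supports)
    return {claim: len(reach(claim)) for claim in claims}


def review_order(depends_on, llm_generated):
    """Rank claims for review (assumed rule, for illustration only):
    LLM-generated claims first, then most downstream dependents, then ID."""
    counts = downstream_counts(depends_on)
    return sorted(
        counts,
        key=lambda c: (c not in llm_generated, -counts[c], c),
    )


# Hypothetical example: C4 rests on C2 and C3, which both rest on C1.
depends_on = {
    "C1": [],
    "C2": ["C1"],
    "C3": ["C1"],
    "C4": ["C2", "C3"],
    "C5": [],
}
llm_generated = {"C1", "C3"}

print(review_order(depends_on, llm_generated))
```

In this toy graph, an error in C1 would propagate to three other claims, so it is ranked ahead of C3, which supports only one. A real system would presumably weigh further structural risk signals; the sketch uses only the dependent count.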